AI’s Impact on Youth Well-Being

AI systems are rapidly shaping the lives of children and youth, with profound risks to their emotional, social, and intellectual development.

The Challenge of Generative AI’s Influence on Youth Development

The introduction of widely available Generative AI (Gen AI) tools such as ChatGPT in 2022 marked a pivotal moment in technological history. As the first technology capable of directing its own activity and convincingly mimicking human interaction, Gen AI has been rapidly adopted by young people. They now engage with machines that can replicate human language and voices, and some build ongoing relationships with AI chatbots as companions. These instant, powerful, and frictionless tools are profoundly shaping the emotional, social, and intellectual development of children and adolescents. Furthermore, Gen AI products, including toys, are often marketed to youth by companies that prioritize shareholder value and lifetime user engagement, raising important questions about the risks and opportunities facing the next generation.

Watch: Young People’s Perspective on AI

For More Insights: Youth Voices on Social AI by the Young People’s Alliance (YPA), which empowers Gen Z through youth activism, campus organizing, and civic engagement.

Why Are Youth Especially Vulnerable to AI Chatbots as Companions?

“These systems are designed to mimic emotional intimacy – saying things like ‘I dream about you’ or ‘I think we’re soulmates.’ This blurring of the distinction between fantasy and reality is especially potent for young people because their brains haven’t fully matured. The prefrontal cortex, which is crucial for decision-making, impulse control, social cognition, and emotional regulation, is still developing. Tweens and teens have a greater penchant for acting impulsively, forming intense attachments, comparing themselves with peers, and challenging social boundaries.”

Source: Why AI companions and young people can be a dangerous mix, Stanford Report

The Research

“Overreliance on chatbots may lead to increased social isolation, reduced empathy, and unhealthy emotional attachments, which could undermine social cohesion, eroding both national security and economic prosperity.”

Source: Designing AI to Help Children Flourish, Global Solutions Journal

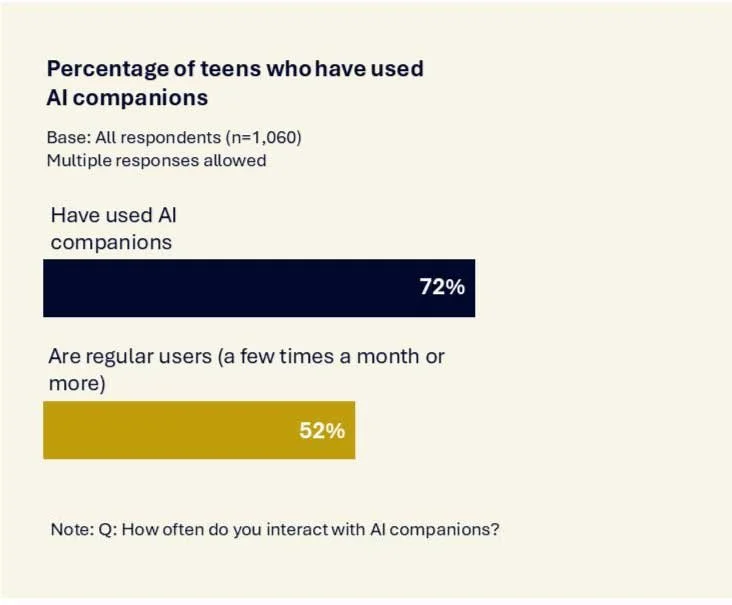

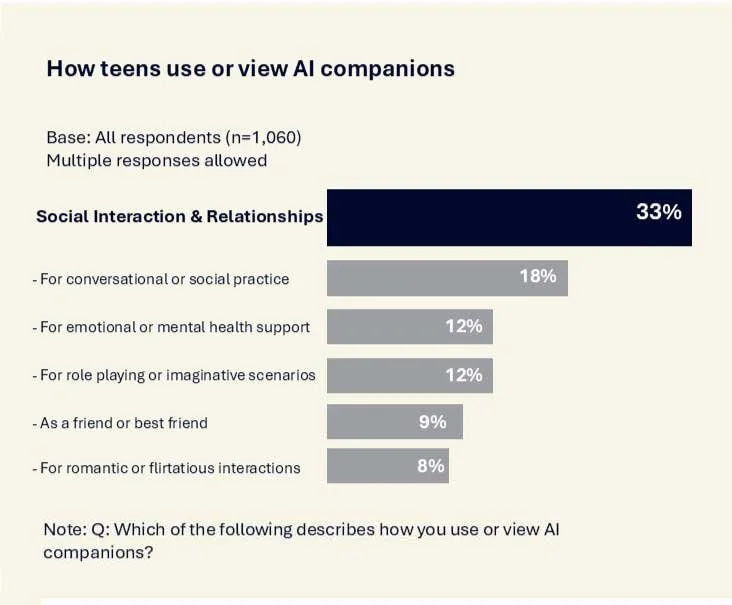

72% of teens have used AI companions

33% use AI companions for social interaction and relationships

Source: Common Sense Media, 2025

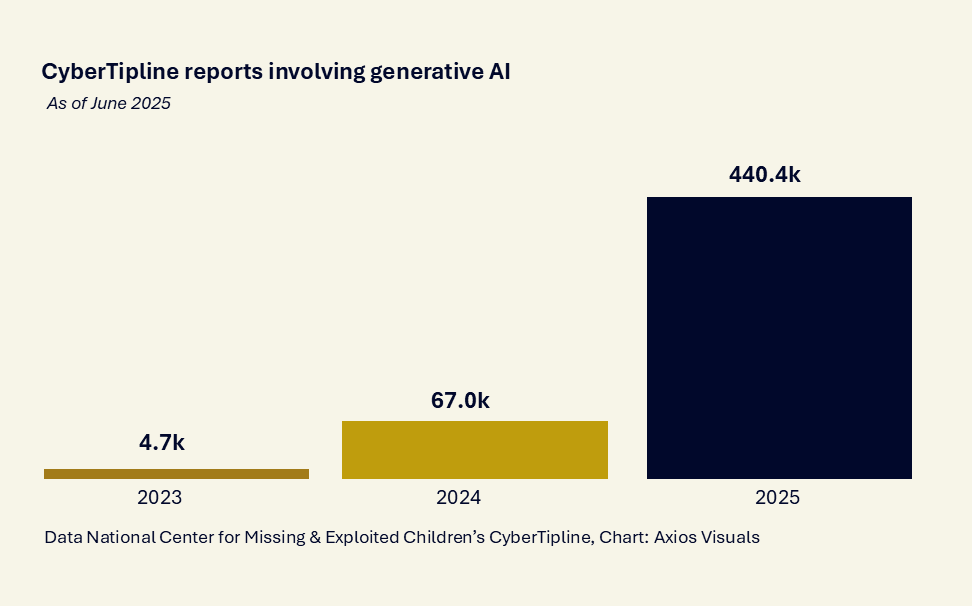

The National Center for Missing & Exploited Children’s CyberTipline saw a 9,270% increase in child exploitation reports involving generative AI from 2023 to 2025.

Expert Insights

Fireside Chat

Professor Sonia Livingstone, Director of LSE Digital Futures for Children Centre; Professor Henry Shevlin, Associate Director of the Leverhulme Centre for the Future of Intelligence; Ron Ivey, Founder & CEO of Noēsis Collaborative

Interview

Ravi Iyer, Managing Director of the USC Neely Center for Ethical Leadership and Decision Making and of the Psychology of Technology Institute

Panel

Eugenia Kuyda, CEO of Replika; Dr. Sherry Turkle, Professor of the Social Studies of Science and Technology at MIT; Ron Ivey, Founder & CEO of Noēsis Collaborative

Questions Parents Can Ask to Assess Whether an AI Tool Is Safe for Their Child

Product Testing

Has this product been independently tested or reviewed for kid/teen safety by credible institutions? Do those tests assess its impacts on mental health or development? Can the company demonstrate that it protects the child’s right to develop?

Data Collection

What data does it collect from kids/teens, who is it given to, and how long is it kept?

Selling of Information

Does the company make money by maximizing your child’s time on the app or by selling your child’s data to advertisers?

Chatbot Behavior

Does the chatbot mimic humanlike behavior and language to develop intimacy with your child?

Chatbot Advice

What happens when your child asks for advice on sensitive topics?

Chatbot Presentation

Does it ever present itself as a trusted authority (therapist, doctor, lawyer) or as a celebrity?

Chatbot Permissions

Is the chatbot allowed to say anything seductive or sexual in nature?

Resources

- This piece explains why AI “companions” have quickly become part of teen life, tapping into normal adolescent drives for curiosity, autonomy, and belonging, while also surfacing clear safety risks. It highlights survey findings (including how many teens use companions and how often) and offers a grounded stance for adults: set boundaries, stay curious, and build teens’ relationship skills rather than responding with panic. Read Article.

- Generative artificial intelligence (GenAI) tools are increasingly embedded in digital services and products used for and in education (EdTech), raising urgent questions about their impact on children’s learning and rights. The Digital Futures for Children centre, a joint initiative of the London School of Economics and the 5Rights Foundation, takes a holistic child-rights approach to children’s learning in evaluating five GenAI tools used in education: Character.AI, Grammarly, MagicSchool AI, Microsoft Copilot, and Mind’s Eye. Read Article.

- Drawing parallels to earlier worries about online socializing, this article argues that AI companionship is different because it is fundamentally one-sided, so it may not build the give-and-take skills teens need for real intimacy. It warns that “always-available” empathy and validation can make human friendships feel slower and harder by comparison, potentially increasing reliance on low-friction pseudo-relationships during a critical developmental window. Read Article.

- Current AI governance frameworks often overlook the developmental needs and rights of children, failing to ensure that AI technologies foster human flourishing rather than cause harm. This brief for the G20 argues that AI companies have both an opportunity and a responsibility to prioritize child well-being by designing chatbots that enhance, rather than replace, human relationships. The principles and recommendations of this brief will form the foundation of the workshop design. Read Article.

- Drawing on a review of recent literature, expert interviews, a salon with leading technologists and scholars, and webinars with Social AI researchers, this white paper explores the question: How might we design AI systems for social connectedness and human flourishing? It provides a framework for thinking about the human choices in the design, governance, and use of AI systems and how those choices affect our social and emotional capabilities. Read Article.

- This episode frames AI relationships as a new form of “artificial intimacy,” asking what it means when a bot can feel like a therapist, friend, or partner. Turkle’s lens is less about novelty and more about consequences: how these interactions may reshape expectations of care, attention, and connection, and what we risk losing when intimacy becomes simulated and optimized. Read Article.

- This column argues that highly engaging chatbots can be especially dangerous for vulnerable users, describing how persuasive, emotionally responsive systems may foster dependency and intensify crisis situations. It calls for clearer accountability, treating safety failures not as unfortunate edge cases but as foreseeable harms that should trigger stronger guardrails and liability. Read Article.

- Part of an “All the Lonely People” series, this episode looks at startups promising to “solve” loneliness, ranging from AI relationship coaching to platforms that stage guided conversations or match strangers for dinner. The conversation probes a core tension: when tech business models compete for our leisure time, do “connection products” actually rebuild community, or do they just monetize the very attention that makes connection harder? Read Article.

- This interview moves from a critique of “loneliness-solving” AI investments to a more nuanced agenda: designing AI that supports real-world relationships rather than replacing them. It highlights practical possibilities (like helping isolated people find groups, events, and “third places”) while naming structural risks, especially the incentive to make bots ever better at mimicking empathy and intimacy to maximize time on the platform. Read Article.

- This piece examines how chatbots are intentionally built to sound like a social actor, using first-person language to feel conversational, relatable, and “present” rather than like a neutral tool. It raises the deeper design question underneath the grammar: when systems are optimized to feel humanlike, how does that shape user trust, attachment, and responsibility for outcomes? Read Article.

- Covering the proposed GUARD Act from Senators Josh Hawley and Richard Blumenthal, this article describes a push for bright-line protections for kids: age verification, bans (or strict limits) on minors’ access to “AI companion” chatbots, and requirements that bots disclose they aren’t human. The policy framing is explicit: these systems can emotionally influence young users, so child safety needs to be built into both product design and regulation. Read Article.

- Written through a public-health lens, this article argues that while AI companions may offer short-term soothing, they don’t replace the protective effects of real human ties on health and resilience. It reframes the debate away from novelty (“Is this cool?”) toward outcomes (“Does this measurably reduce loneliness and strengthen relationships, or does it subtly displace them?”). Read Article.